Acquiring Text

LIS 4/5693: Information Retrieval and Text Mining

Introduction

Digital Trace Data

Strengths of Digital Trace Data

1. Always On

Strengths of Digital Trace Data (Cont.)

2. Non-Reactive

Strengths of Digital Trace Data (Cont.)

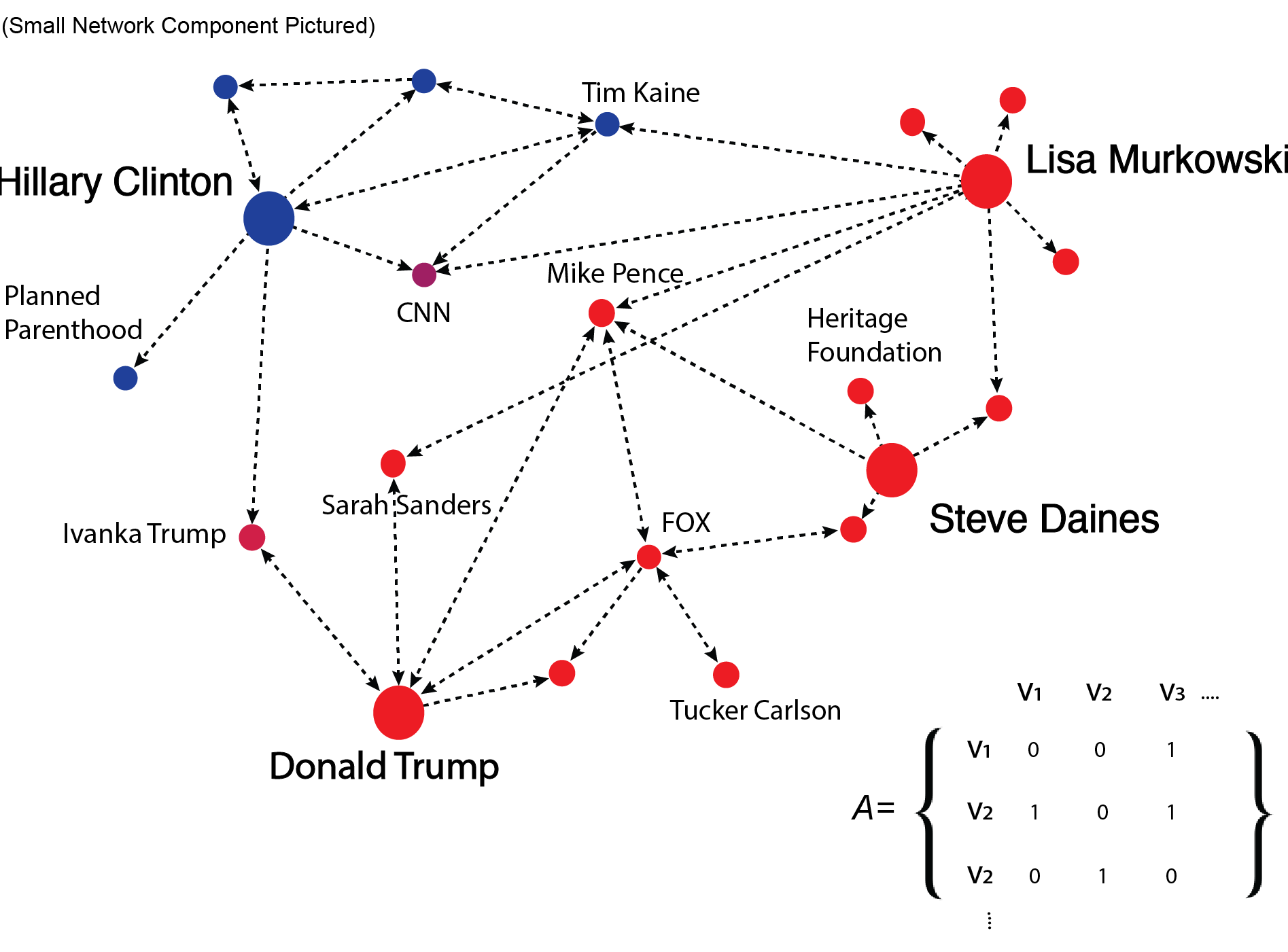

3. Captures Social Relationships

Weakness of Digital Trace Data

Despite the considerable advantages of digital trace data, they also create a range of challenges for empirical observation and causal inference.

1. Inaccessible

Weakness of Digital Trace Data (Cont.)

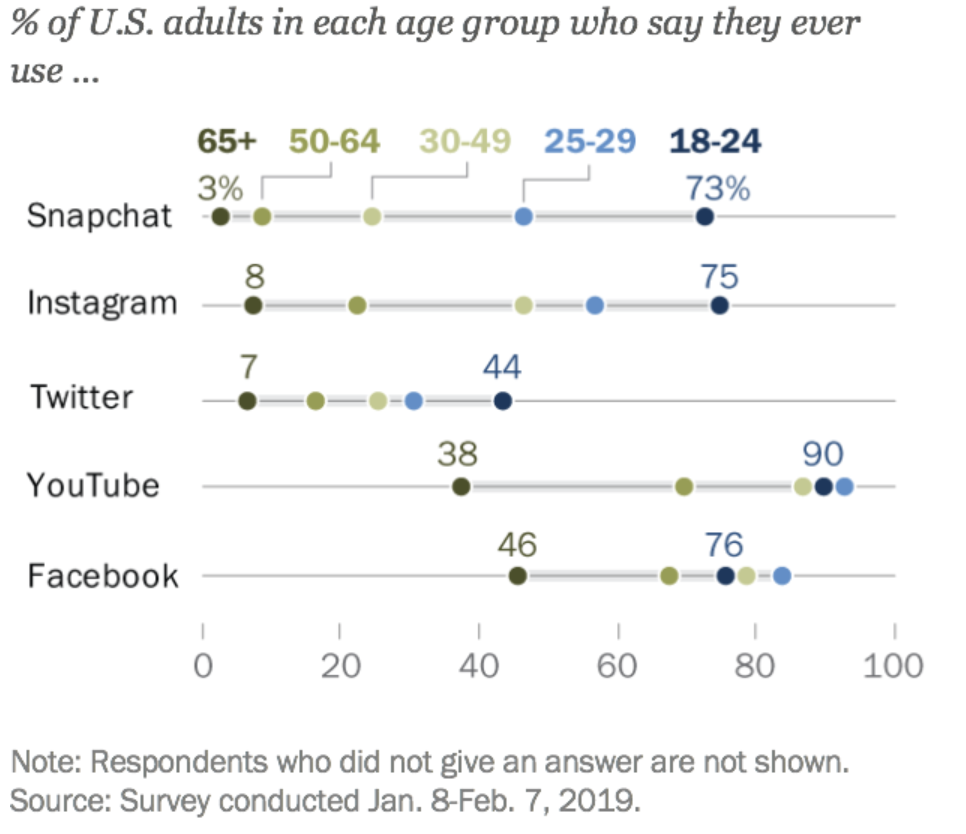

2. Non-Representative

Weakness of Digital Trace Data (Cont.)

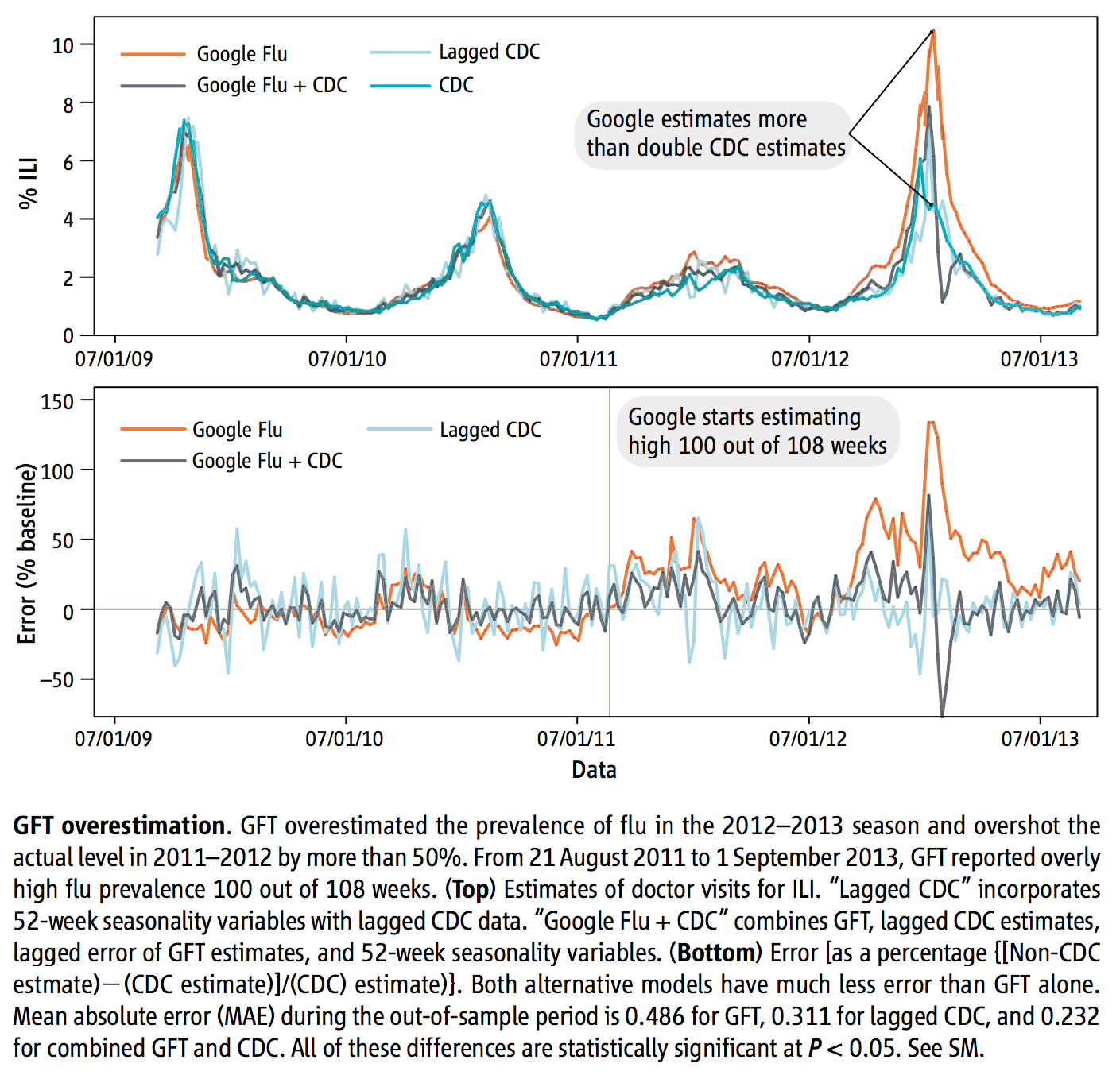

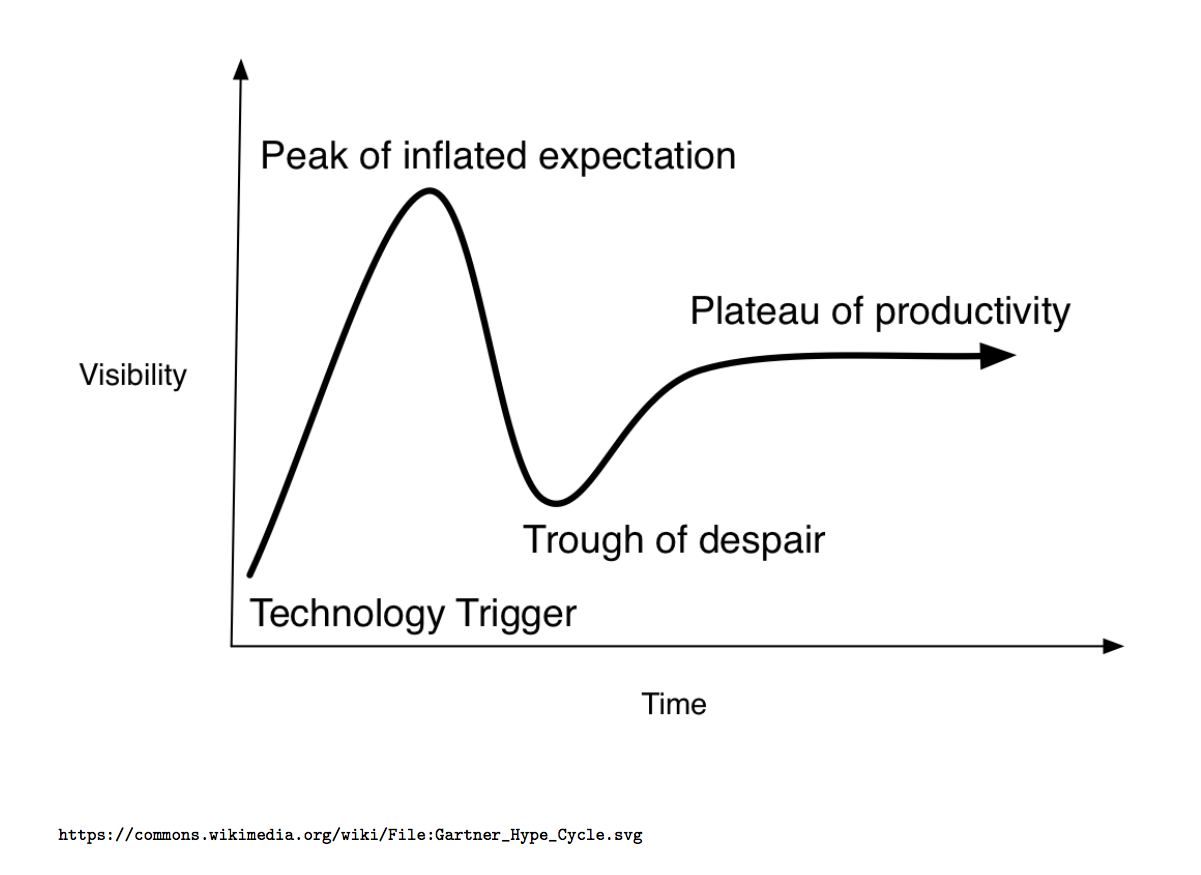

3. Drifting

Weakness of Digital Trace Data (Cont.)

4. Algorithmic Confounding

Weakness of Digital Trace Data (Cont.)

5. Unstructured

Weakness of Digital Trace Data (Cont.)

6. Sensitive

Weakness of Digital Trace Data (Cont.)

7. Incomplete

Weakness of Digital Trace Data (Cont.)

8. Elite Bias

You know the famous saying, “history is written by the victors”? Much digital trace data is also created by people who are elites, and who might provide selective or incomplete accounts of what is going on, or worse.

9. Positivity-Bias

Finally, digital trace data often have performative dimensions. Many people do not report negative information about themselves online precisely because they know that their friends, colleagues – or other people they do not know – may be watching them. This creates another common form of bias in social media research.

Future of Digital Trace Data

We are Data

We are filled with data in today’s networked society

Through our web activity, we are assigned a gender, ethnicity, class, age, education level, and potential status as a parent of x children (digital trace data, digital footprint, or digital breadcrumbs)

If internet metadata identifies a user as a foreigner, then they lose the right to privacy afforded to U.S. citizens

Who would have thought that class status, citizenship, and ethnicity could be algorithmically understood?

John Cheney-Lippold. (2017). We are Data: algorithms and the making of our digital selves. New York University Press.

We are Data (Cont.)

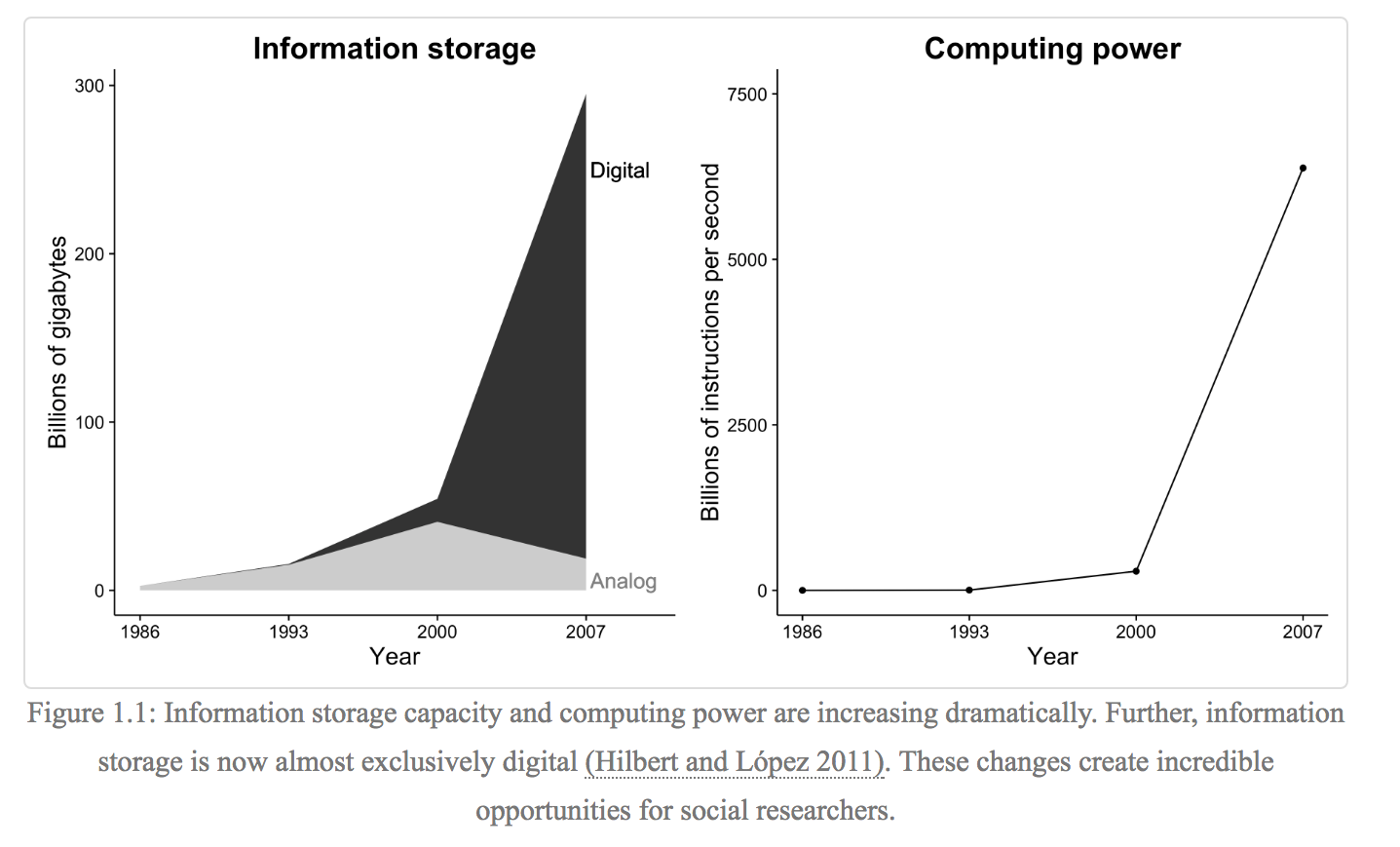

We live in a world of ubiquitous networked communication

- the technologies that constitute the Internet are so woven into the fabric of our daily lives that, for most of us, existing without them seems unimaginable

We also live in a world of ubiquitous surveillance

- same technologies have helped spawn an impressive network of governmental, commercial, and unaffiliated infrastructures of mass observation and control

- most of what we do in this world has at least the capacity to be observed, recorded, analyzed, and stored in a databank

- HOW?

- storage is cheap

- computers can analyze information quickly, both in real time and retrospectively

- the software that mediates our daily activities can easily be configured to record everything it sees and report it upstream

John Cheney-Lippold. (2017). We are Data: algorithms and the making of our digital selves. New York University Press.

We are Data (Cont.)

We call people ‘terrorists’ based on metadata; We kill people based on metadata

A data-based attack is a ‘signature strike’

- a strike that requires no ‘target identification’ but rather an identification of groups of men who bear certain signatures or defining characteristics associated with terrorist activity but whose identities are unknown

In the early 2000s, strikes in the US drone program were “targeted”

The US does not publicly differentiate between its “targeted” and “signature” strikes

- the frequency of drone attacks rose sharply, from 49 between 2004 and 2008 to 372 between 2009 and 2015

John Cheney-Lippold. (2017). We are Data: algorithms and the making of our digital selves. New York University Press.

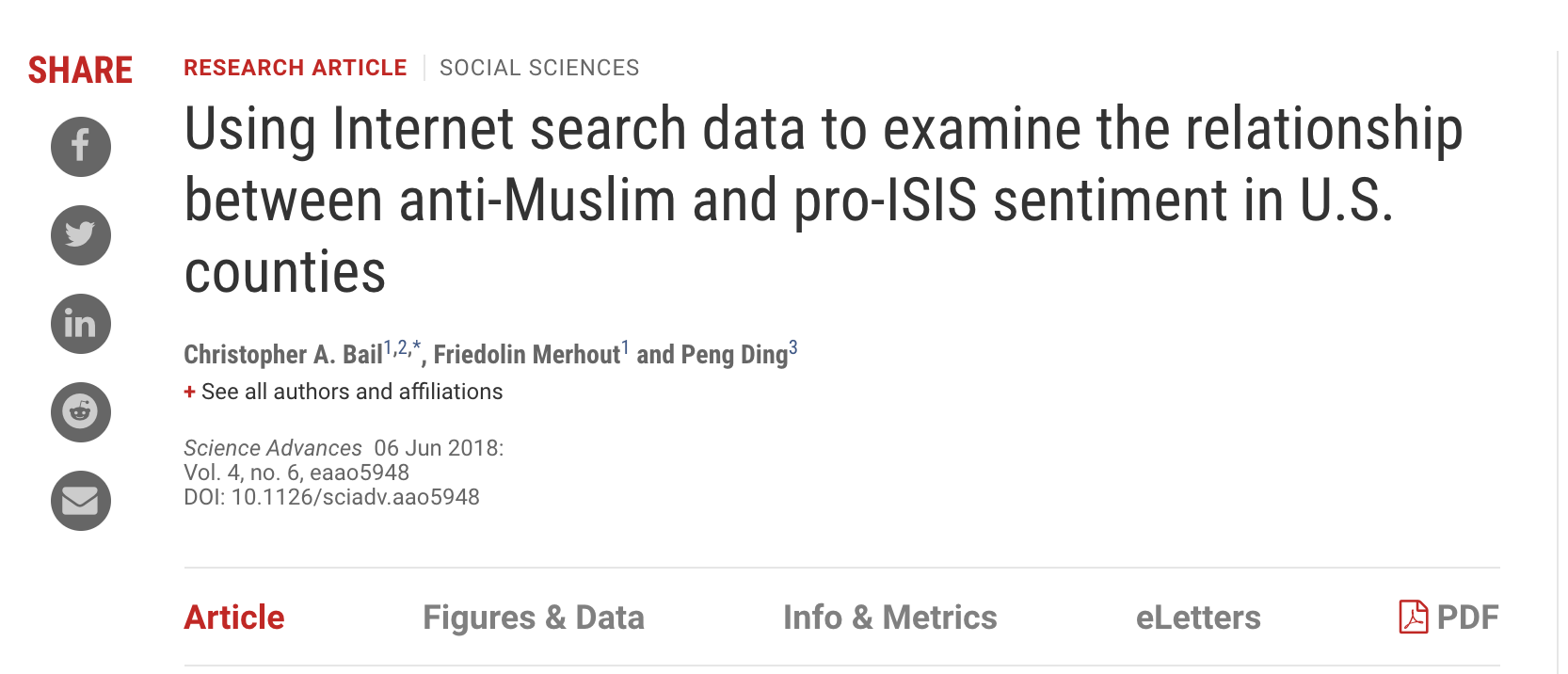

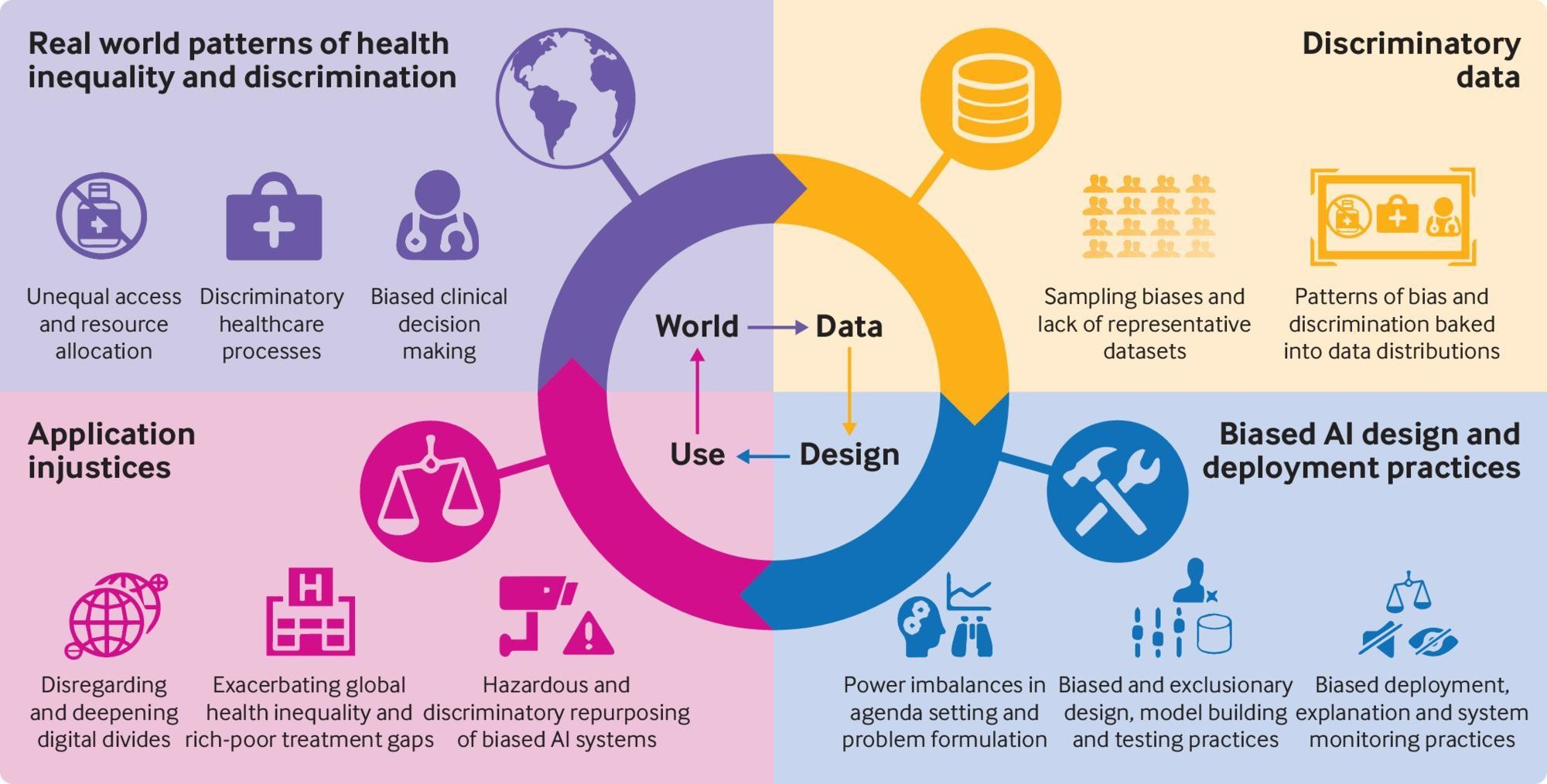

Algorithmic Confounding/Bias

It occurs when a computer system reflects the implicit values of the humans who are involved in collecting, selecting, or using data

Algorithmic Confounding/Bias (Cont.)

- Algorithms might disseminate social biases against certain groups defined by sociodemographic factors (such as race, gender, or geography)

- The output of these algorithms is primarily dependent on the annotated datasets and is sensitive to social bias created by humans

- An algorithm that learns from both text and metadata is likely to be more biased than one trained on text-only data, because the metadata includes the author’s nationality, discipline, etc.

- Even with text-only data, algorithms will still learn bias due to the language problems generated by second-order effects for text-based machine learning

- Additionally, when using chatbots (such as ChatGPT) to provide real-time recommendations, the chatbot’s dialogue can be modelled with available metadata to adjust the features of the replier in terms of gender, age, and mood

(Metaphors in HCI)

Lamba, M., Madhusudhan, M. (2022). Text Data and Mining Ethics. In: Text Mining for Information Professionals. Springer, Cham. https://doi.org/10.1007/978-3-030-85085-2_11

Ways to Mitigate Biases

- Understanding how the data was generated

- Using tools that identify bias in models and algorithms, such as FairML, IBM AI Fairness 360, Accenture’s “Teach and Test” Methodology, Google’s What-If Tool, and Microsoft’s Fairlearn

- Making the data, process, and outcome open, thus making them transparent and helping us to judge them

- Creating algorithms and standards that can be adapted from one application to another

- Following the set of standards proposed by the Association for Computing Machinery US Public Policy Council and applying them at every stage in the algorithm creation process

- Enforcing accountability in policies during auditing in pre- and post-processing, as well as in standardized assessment, since algorithms do not make mistakes, but humans do

Lamba, M., Madhusudhan, M. (2022). Text Data and Mining Ethics. In: Text Mining for Information Professionals. Springer, Cham. https://doi.org/10.1007/978-3-030-85085-2_11

What Constitutes Research Data?

University: “Material or information on which an argument, theory, test or hypothesis, or another research output is based” (Queensland University of Technology. Manual of Procedures and Policies. Section 2.8.3.)

Digital Project Management: “What constitutes such data will be determined by the community of interest through the process of peer review and program management. This may include, but is not limited to: data, publications, samples, physical collections, software and models” (Marieke Guy)

Government Institution: “Research data is defined as the recorded factual material commonly accepted in the scientific community as necessary to validate research findings, but not any of the following: preliminary analyses, drafts of scientific papers, plans for future research, peer reviews, or communications with colleagues” (OMB Circular A-110, Subpart C, section 36, (d) (i))

Data Science: “The short answer is that we can’t always trust empirical measures at face value: data is always biased, measurements always contain errors, systems always have confounders, and people always make assumptions” (Angela Bassa)

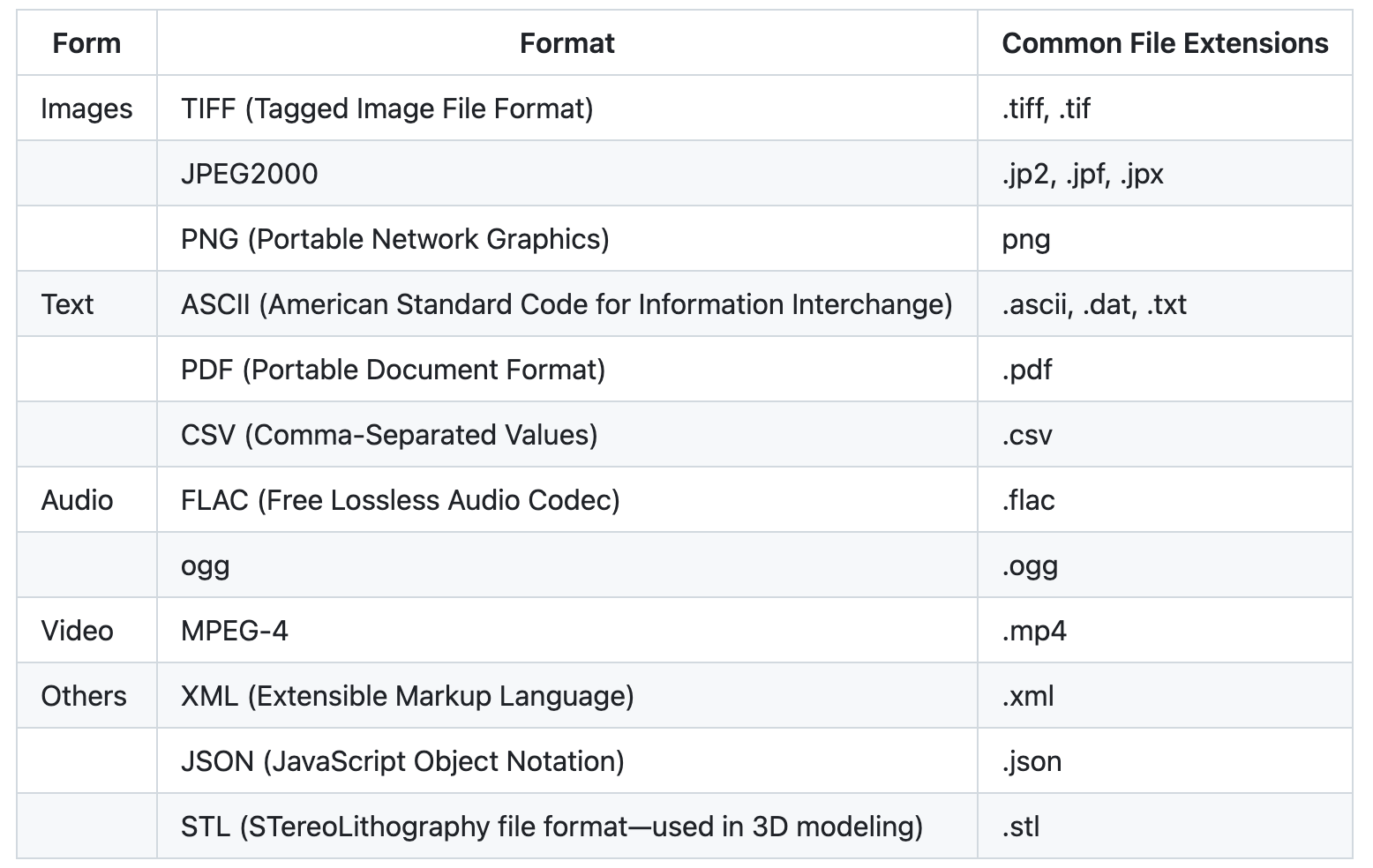

Forms of Data

A small list of open multimedia formats. For a list of file formats, consider checking out the Library of Congress’ list of Sustainability of Digital Formats.

Importance of Using Open Data Formats

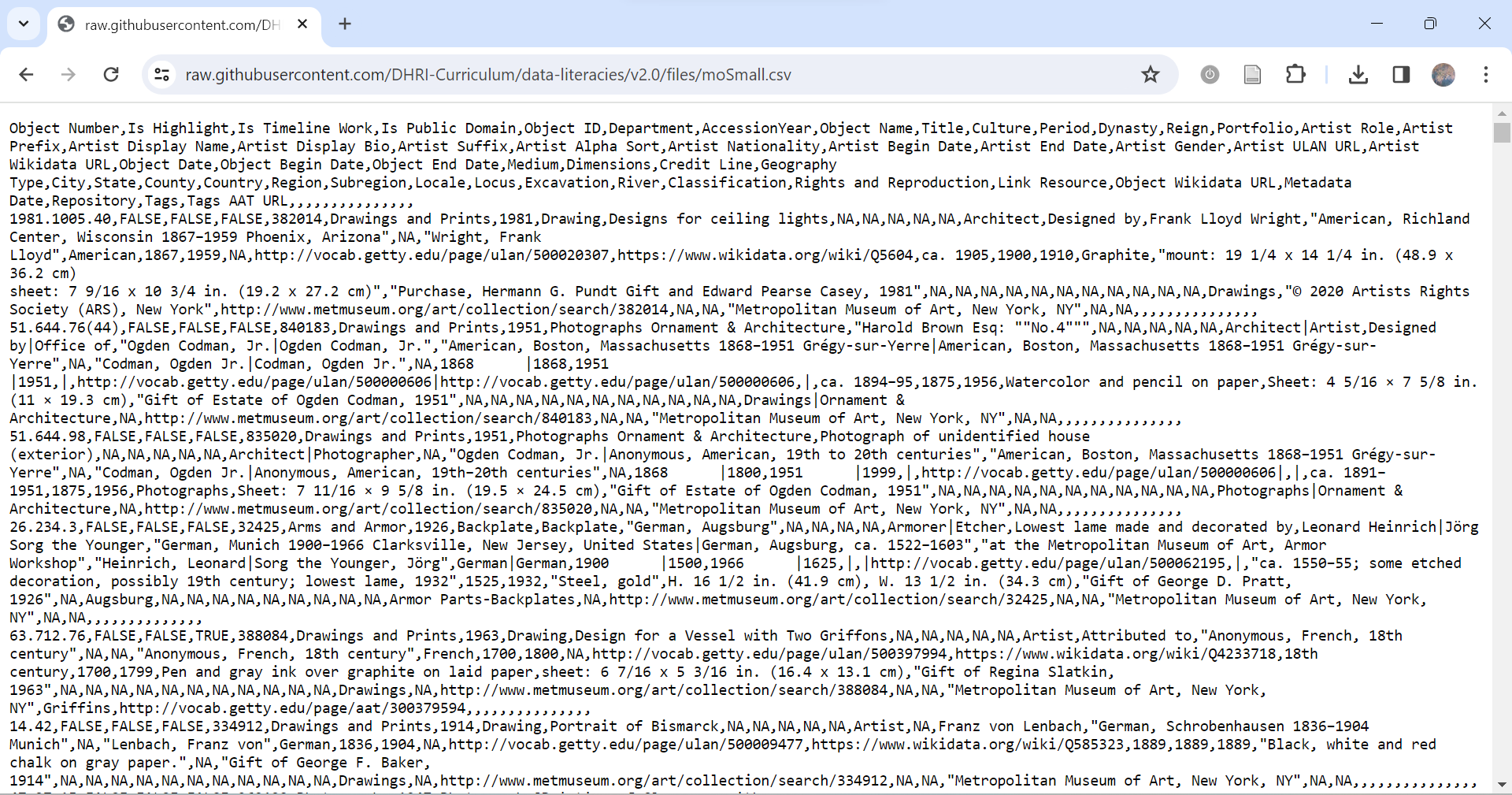

A screenshot of a dataset as a CSV file, which is uncompressed and follows an open standard

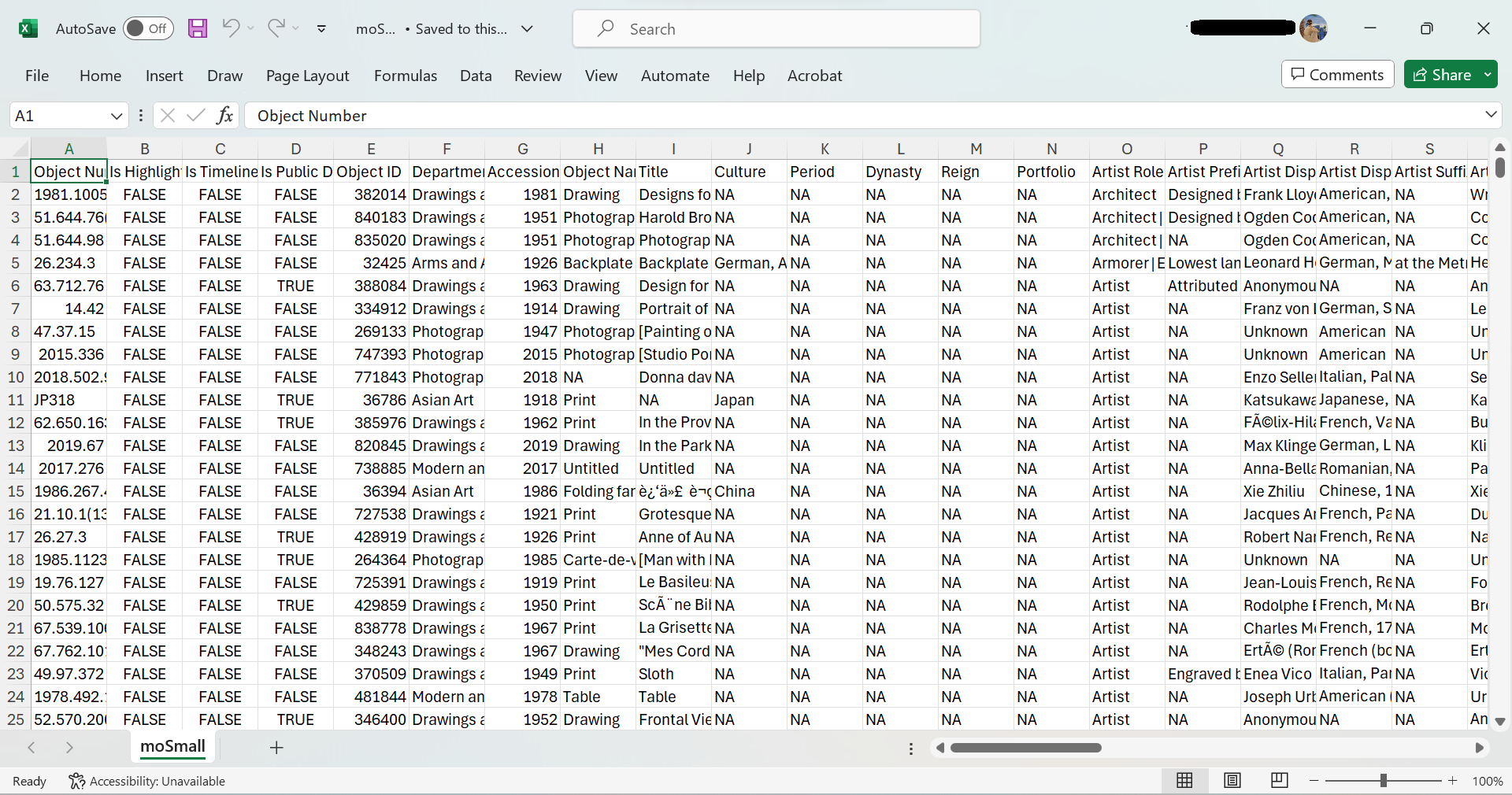

A screenshot of the same dataset in an Excel file (.xlsx). Unlike the previous image, this is a proprietary format
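One practical consequence of an open, plain-text format is that it can be read with nothing more than a language's standard library. The sketch below is illustrative only: the column names and rows are hypothetical stand-ins, not the dataset shown in the screenshots.

```python
import csv
import io

# Hypothetical sample data; the real dataset in the screenshots is not reproduced here.
SAMPLE_CSV = (
    "doc_id,title,word_count\n"
    "1,First document,120\n"
    "2,Second document,85\n"
)


def read_rows(text):
    """Parse CSV text into a list of dictionaries keyed by the header row."""
    return list(csv.DictReader(io.StringIO(text)))


rows = read_rows(SAMPLE_CSV)
for row in rows:
    # Every value arrives as a string, so numeric columns must be converted explicitly.
    print(row["doc_id"], row["title"], int(row["word_count"]))
```

To read a file on disk instead, the same `csv.DictReader` can be given a file object opened with `open(path, newline="", encoding="utf-8")`.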

Challenge: Forms of Data

As you inspect the information present in each image, consider these questions:

- What are some forms of data used in the project?

- What are some forms of data outputted by the project?

- Where was the data retrieved from to complete the project?

Human Computers at NASA is an archival project that “seeks to shed light on the buried stories of African American women with math and science degrees who began working at NACA (now NASA) in 1943 in secret, segregated facilities.”

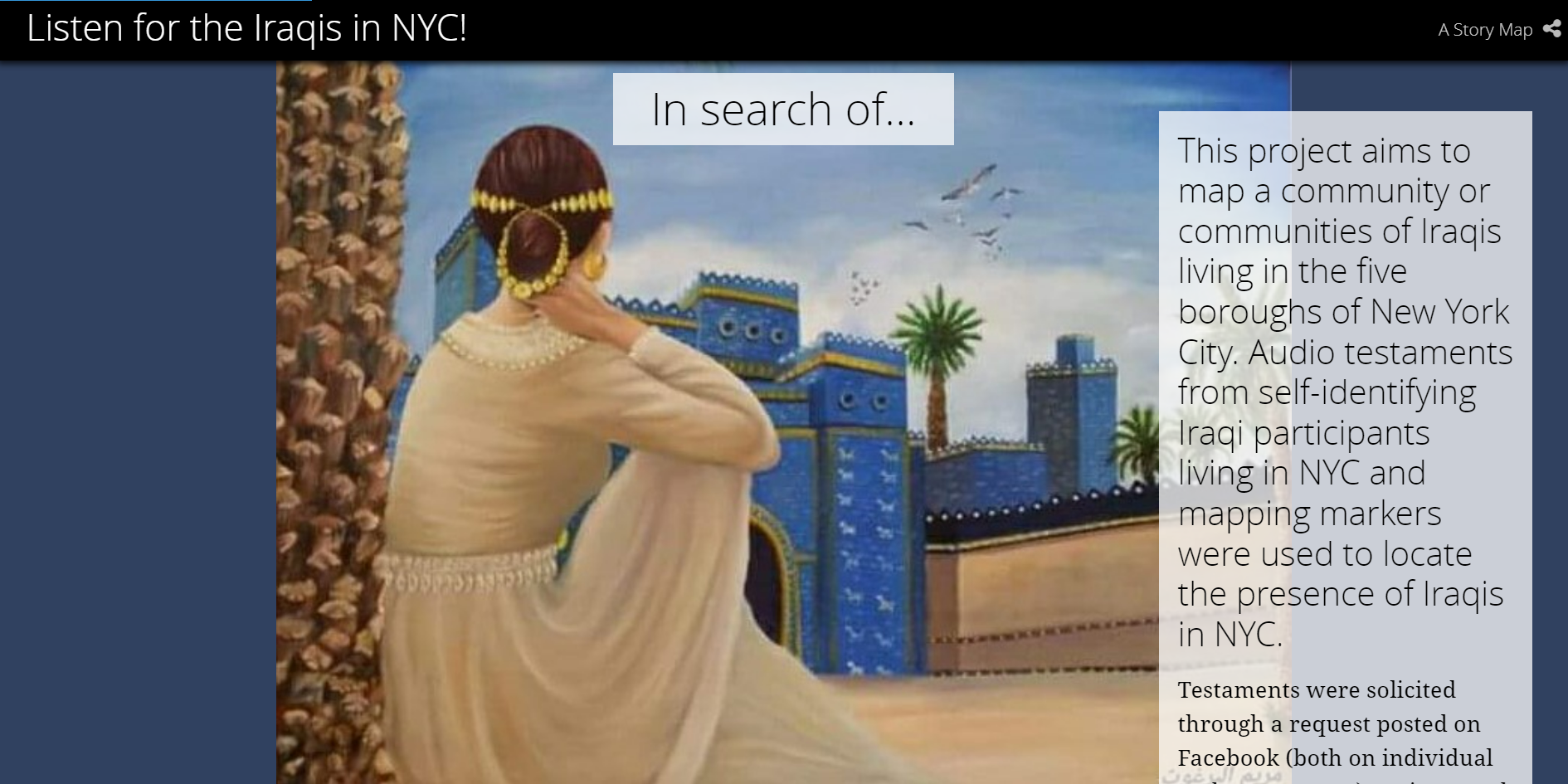

Listen for the Iraqis in NYC! is an audio community mapping project that seeks to locate the Iraqi population in NYC using their own voices.

Institutional Compliance for Data and Research

The Institutional Review Board (IRB) is a floor for ethical responsibility at universities that came to pass after outrage about horrific unethical research studies done on people. A prime example of these grotesque studies is the Tuskegee Syphilis Study (1932-1972).

Born from concerns about the ethical choices made in biomedical and behavioral research, IRB compliance is not broadly applicable.

This leaves holes in institutional ethical regulations and requires researchers in other fields, such as the social sciences, to find other ethical regulations or devise field-specific ethical considerations.

When is an IRB required?

Usually, IRB review is required when ALL of the criteria below are met:

The investigator is conducting research or clinical investigation,

The proposed research or clinical investigation involves human subjects, and

The university or research institution is engaged in the research or clinical investigation involving human subjects.

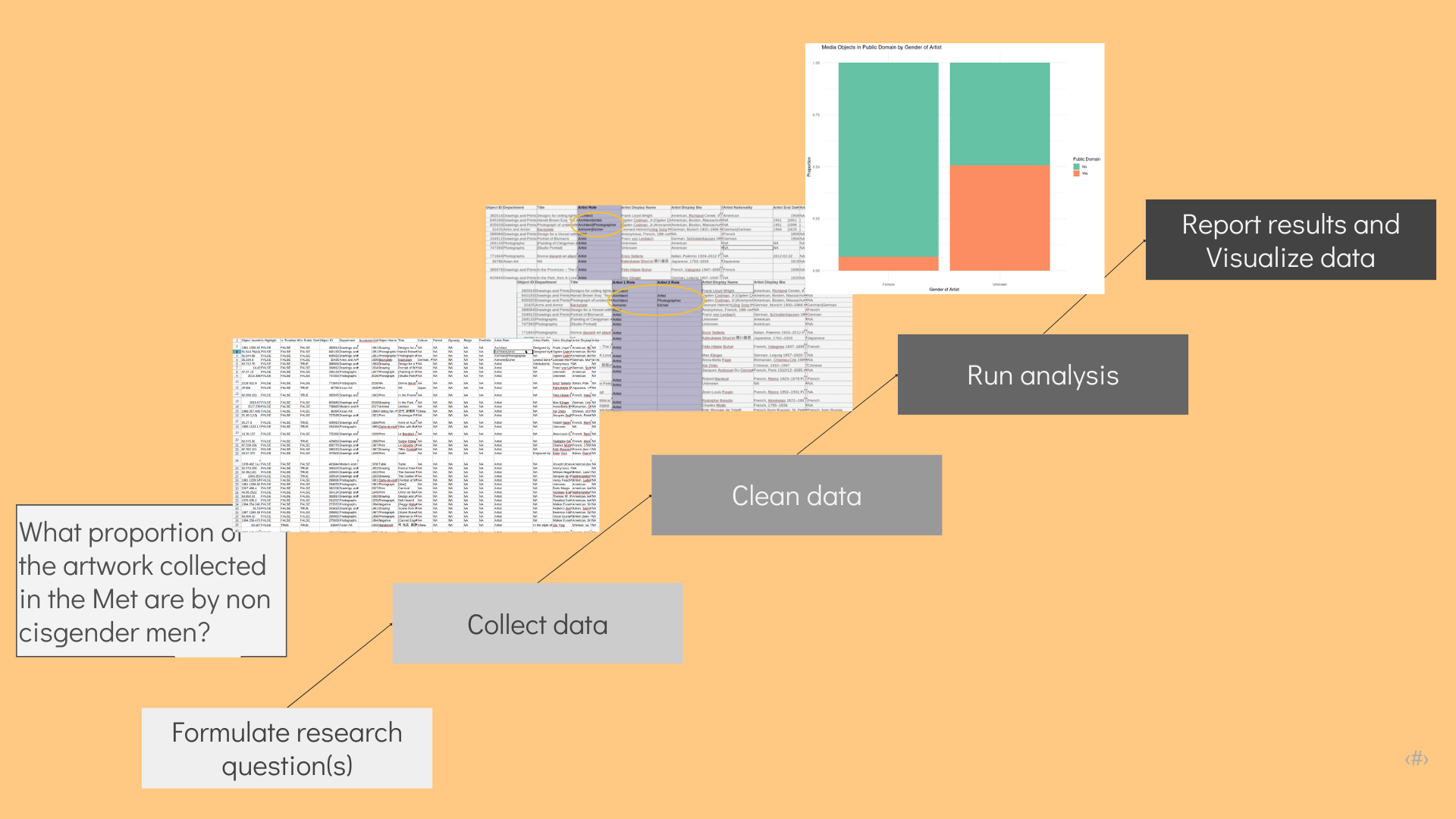

Stages of Data

Stages of Data: Non-Linear

Issues: During the Research Cycle

- Ensuring good, useful data

- Organizing and Structuring data for the user

- Storing the data

- Documenting and Describing the data

- Supporting the Analysis of the data

- Publishing data sets

- Sharing the data and results

Issues: After the Research Cycle

- Curating and Preserving good, useful data

- Preparing the data for the user

- Ingesting and Storing the data

- Ensuring privacy and securing the data

- Re-using the data

Different Ways to Get Data

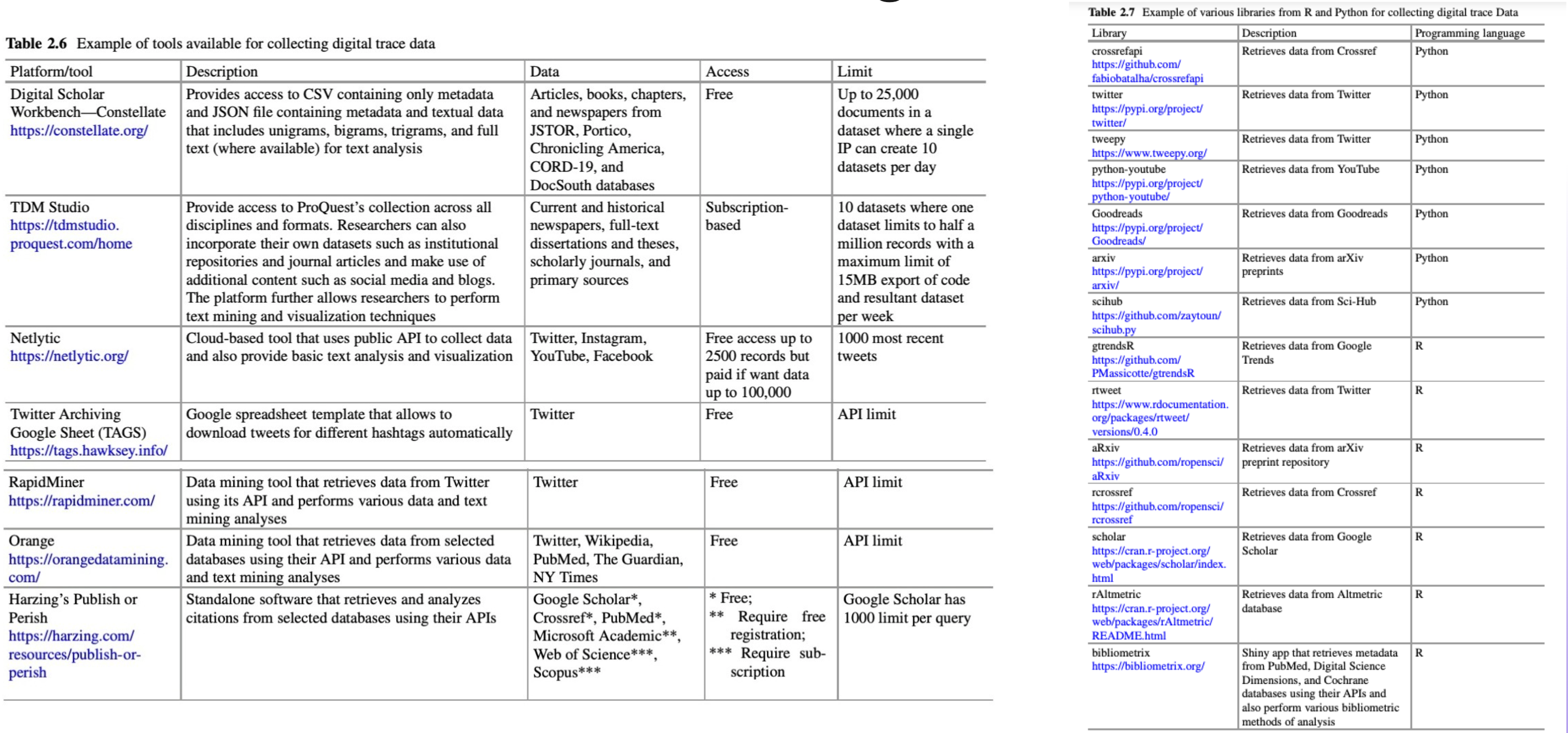

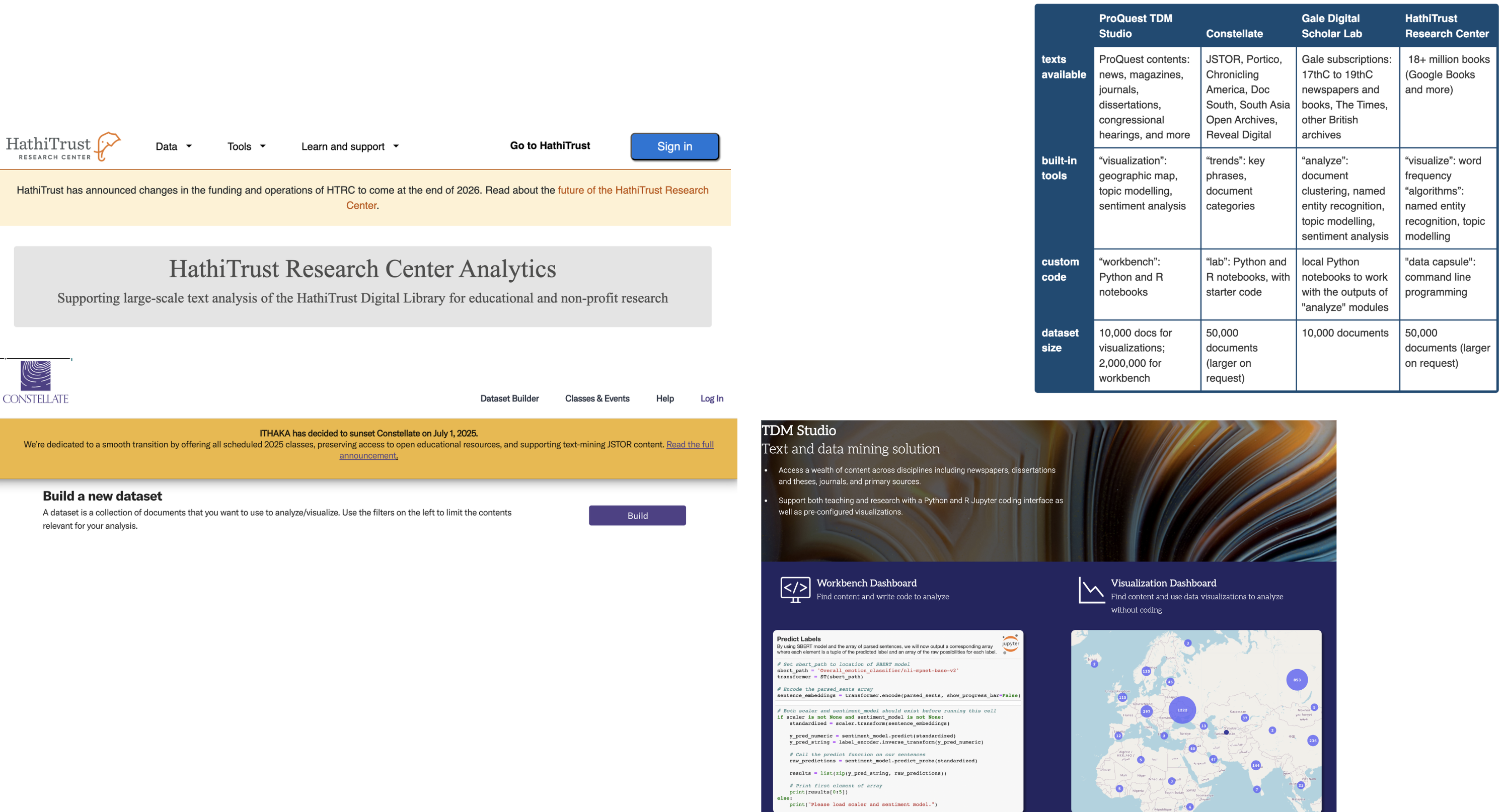

Text Mining Platforms

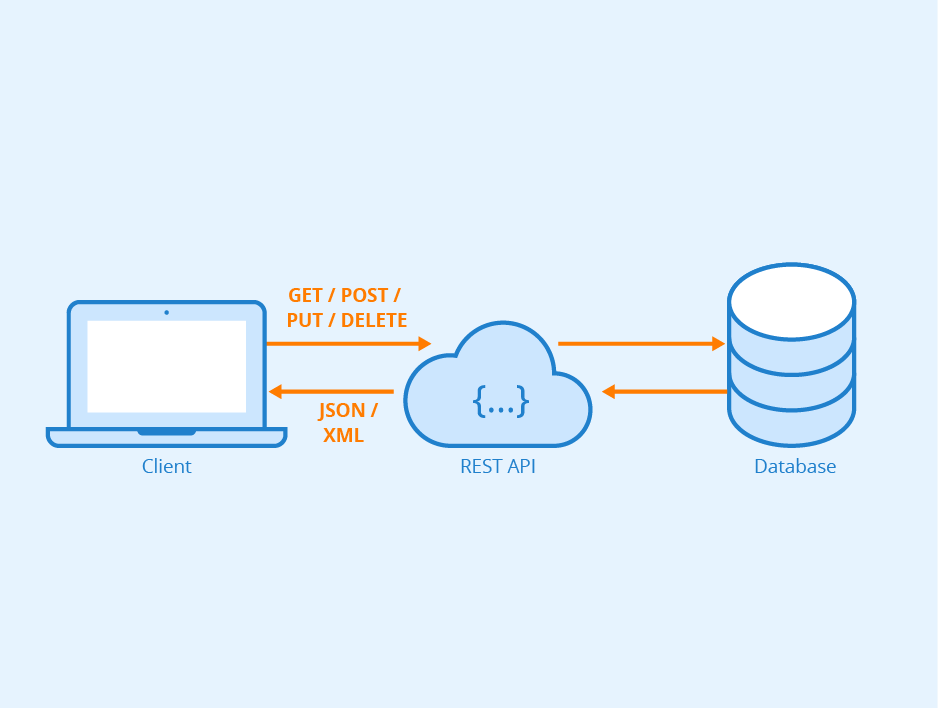

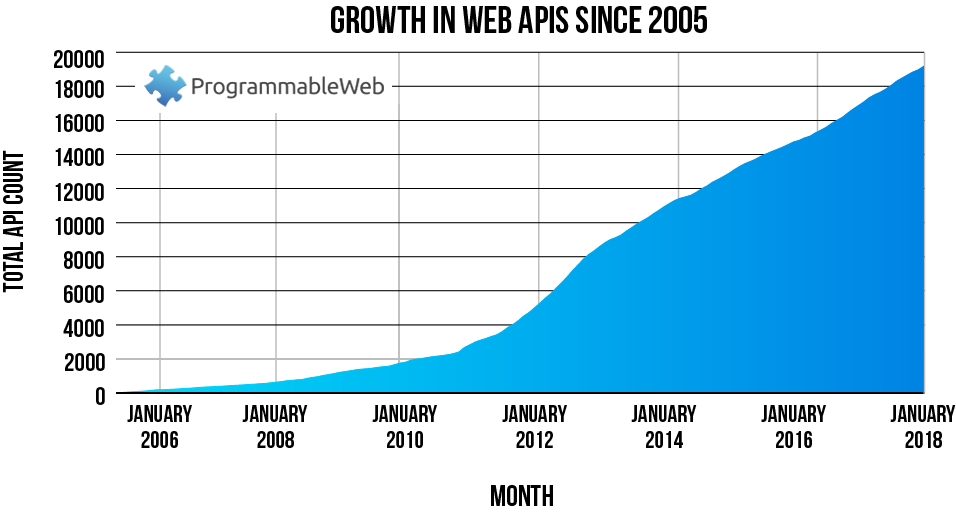

What is an Application Programming Interface?

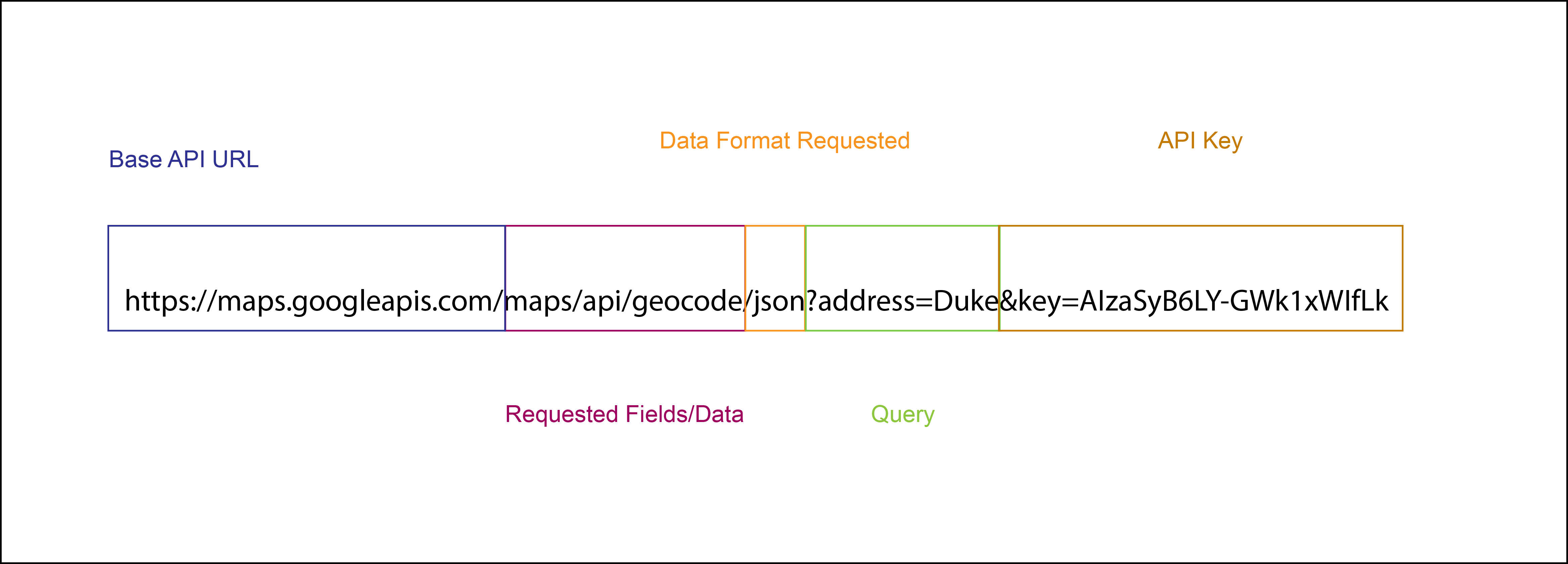

How Does an API Work?

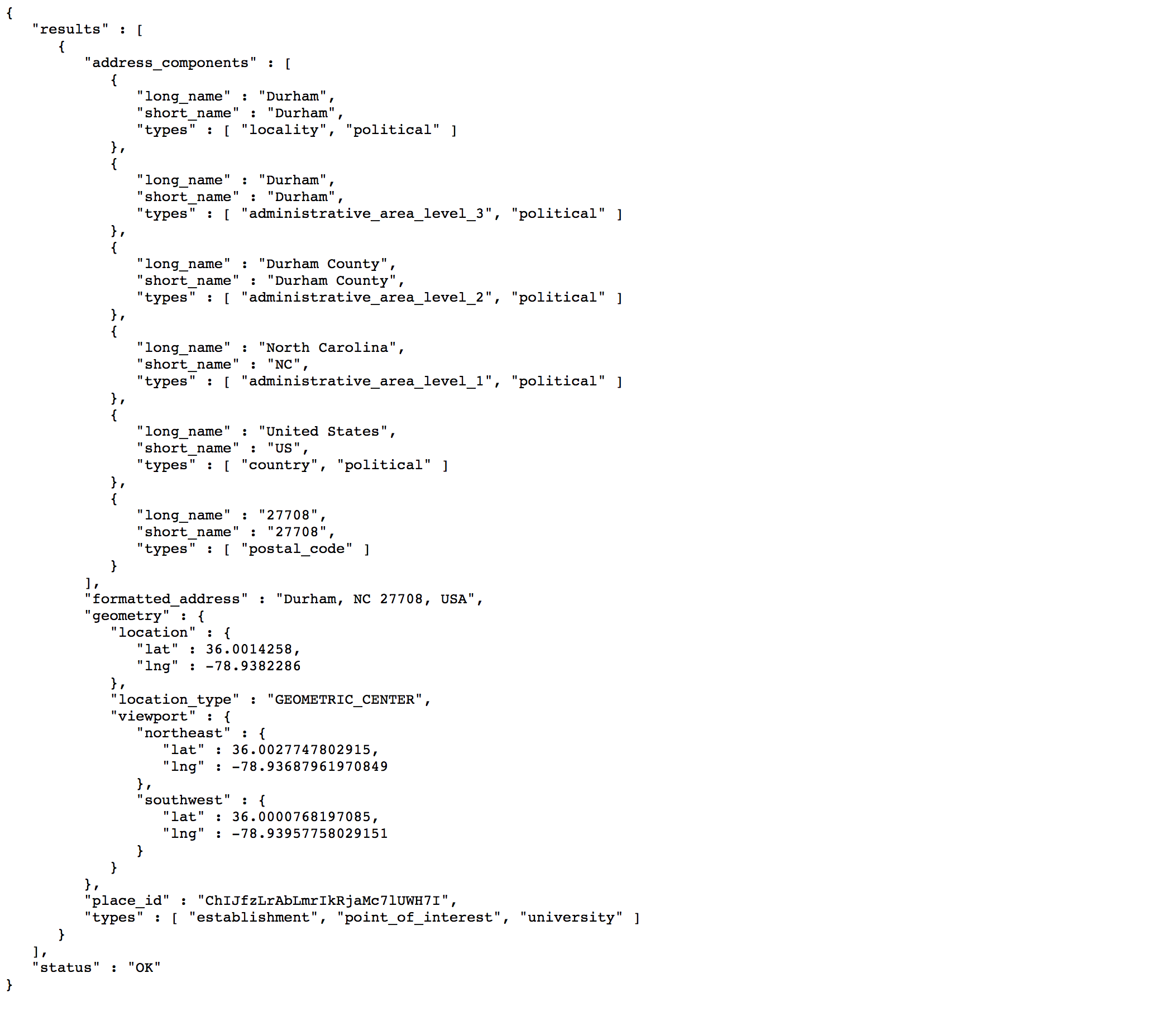

Output of API Call

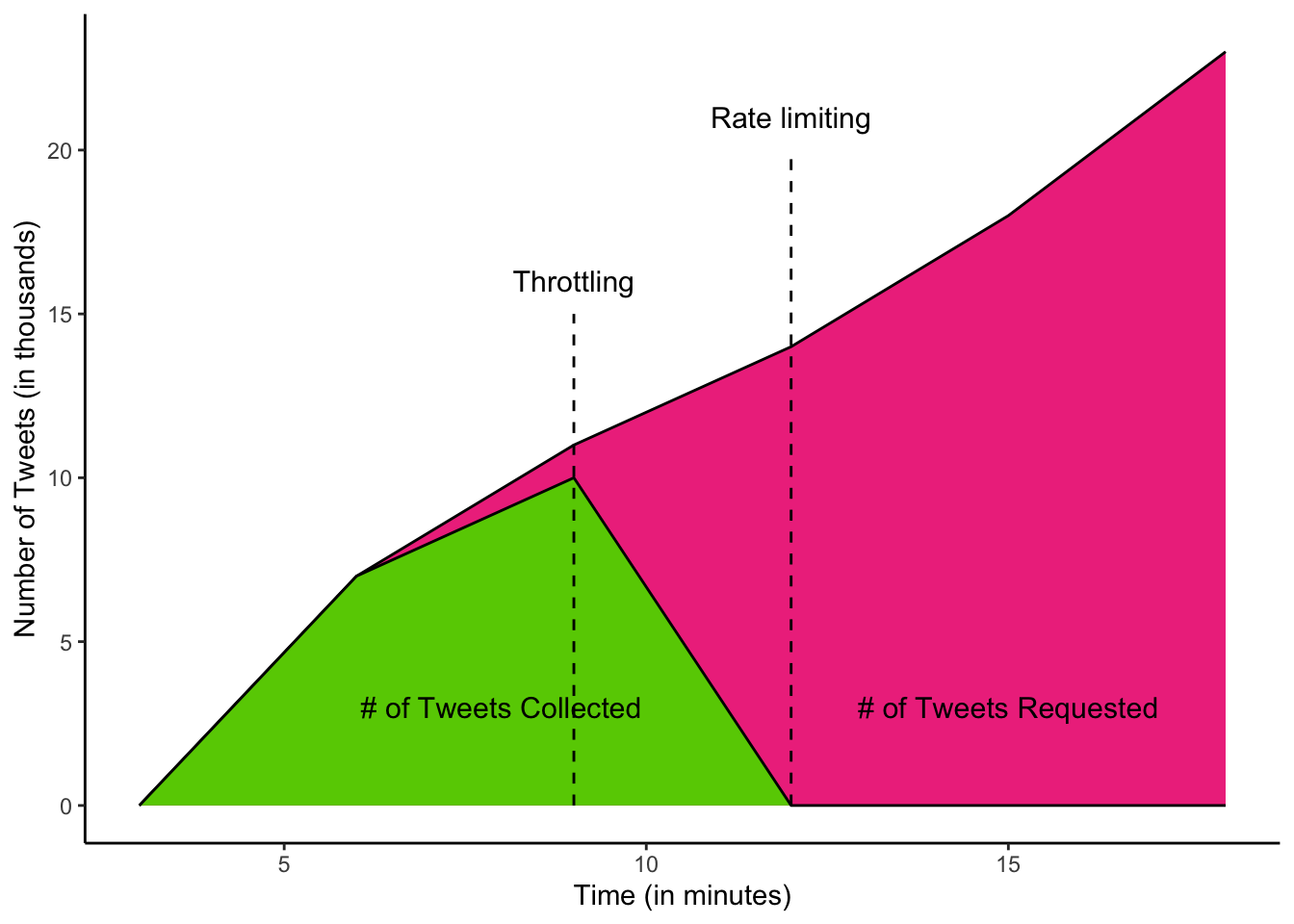
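The slide's screenshot is not reproduced in this text, so the format below is an assumption: many web APIs return their output as JSON, and a text-mining workflow usually begins by pulling the text fields out of that structure. The response body and its field names (`results`, `text`, and so on) are hypothetical.

```python
import json

# Hypothetical API response body; the field names are illustrative only.
RESPONSE_BODY = """
{
  "total": 2,
  "results": [
    {"id": "a1", "title": "First document", "text": "Some text to mine."},
    {"id": "b2", "title": "Second document", "text": "More text to mine."}
  ]
}
"""


def extract_texts(body):
    """Decode a JSON response and return the 'text' field of each result."""
    data = json.loads(body)
    return [item["text"] for item in data.get("results", [])]


texts = extract_texts(RESPONSE_BODY)
print(texts)
```

A real API will document its own response schema; the key names should be taken from that documentation rather than from this sketch.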

Rate Limiting
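Rate limiting means that an API caps how many requests a client may send in a given time window. One simple way for a client to stay under such a cap is to space its requests evenly. The sketch below assumes a hypothetical limit expressed as `max_calls` per `period` seconds; the `Throttle` class is illustrative and not part of any particular API client.

```python
import time


class Throttle:
    """Spaces calls at least period / max_calls seconds apart."""

    def __init__(self, max_calls, period, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = period / max_calls
        self.clock = clock    # injectable so the class can be tested without waiting
        self.sleep = sleep
        self.last_call = None

    def wait(self):
        """Block until enough time has passed since the previous call."""
        now = self.clock()
        if self.last_call is not None:
            remaining = self.min_interval - (now - self.last_call)
            if remaining > 0:
                self.sleep(remaining)
                now = self.clock()
        self.last_call = now


# Usage sketch (hypothetical limit of 30 requests per minute):
# throttle = Throttle(max_calls=30, period=60)
# for query in queries:
#     throttle.wait()
#     send_request(query)  # send_request is a placeholder for the actual API call
```

Even spacing is the most conservative strategy; APIs that allow short bursts may be better served by a token-bucket approach, and any published limits in the API's documentation take precedence.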

Challenges of Working with APIs

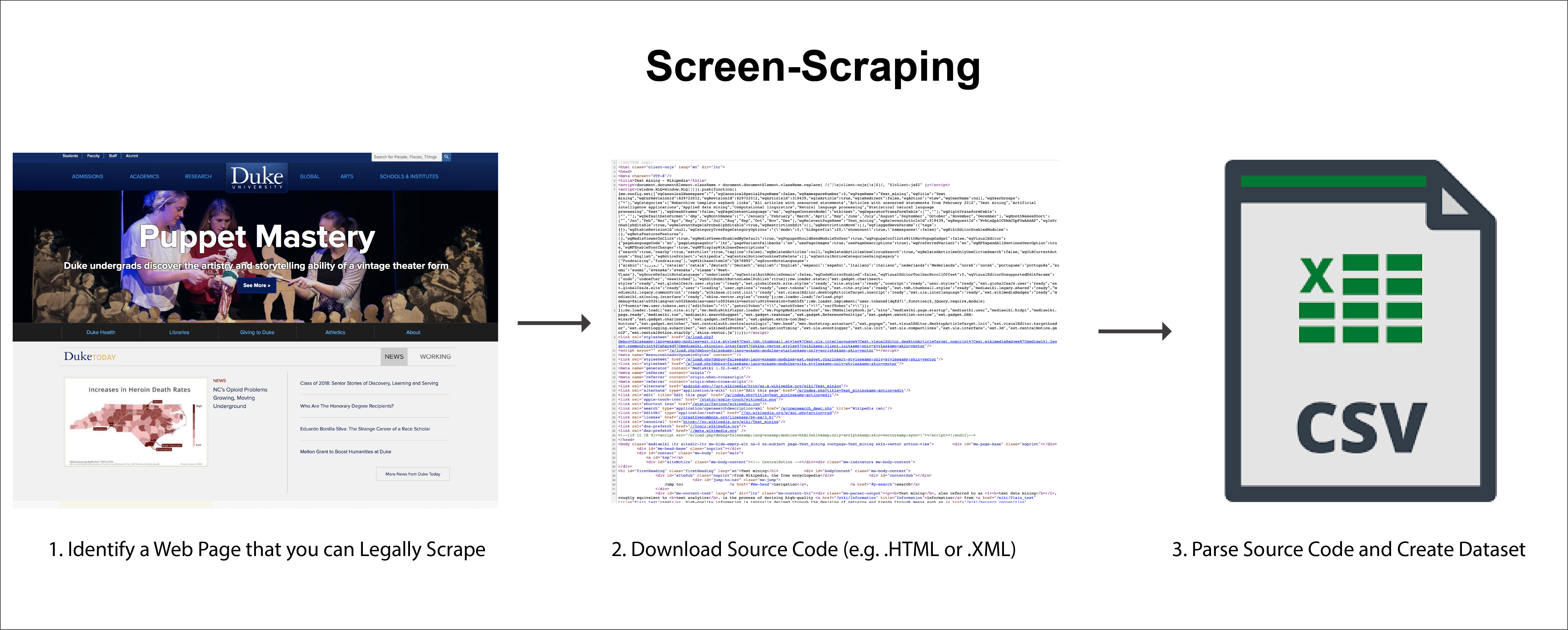

Screen-Scraping or Web Scraping
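Screen-scraping means extracting data from the HTML that a website serves to browsers, rather than from an API. As a minimal sketch of the idea, the example below pulls paragraph text out of an HTML string using only Python's standard library. The sample page is hypothetical; a real scraper would first download the page and would usually need selectors tailored to that site's markup.

```python
from html.parser import HTMLParser


class ParagraphExtractor(HTMLParser):
    """Collects the text found inside <p> elements."""

    def __init__(self):
        super().__init__()
        self.in_paragraph = False
        self.paragraphs = []

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self.in_paragraph = True
            self.paragraphs.append("")

    def handle_endtag(self, tag):
        if tag == "p":
            self.in_paragraph = False

    def handle_data(self, data):
        # Text inside nested tags such as <b> still belongs to the open paragraph.
        if self.in_paragraph:
            self.paragraphs[-1] += data


def scrape_paragraphs(html):
    """Return the stripped text of every <p> element in the HTML string."""
    parser = ParagraphExtractor()
    parser.feed(html)
    parser.close()
    return [p.strip() for p in parser.paragraphs]


# Hypothetical page content used only for illustration.
SAMPLE_HTML = (
    "<html><body><h1>News</h1>"
    "<p>First <b>story</b>.</p><p>Second story.</p>"
    "</body></html>"
)

paragraphs = scrape_paragraphs(SAMPLE_HTML)
print(paragraphs)
```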

Is Screen-Scraping Legal?

So… When Should I Use Screen-Scraping?